Submitted by Joel Hughes

Author:

Original: Dynamic Ecology

There is a persistent myth (some might even call it a zombie idea) that getting tenure in academia requires working 80 hours a week. There’s even a joke along the lines of “The great thing about academia is the flexibility. You can work whatever 80 hours a week you want!” The idea that you need to work 80 hours a week in order to publish or get grants or tenure is simply wrong. Moreover, I think it’s damaging: I hear routinely from younger folks (often women) who are seriously considering leaving academia primarily because they think that a tenure track position will require working so much that they wouldn’t be able to have any life outside work (including raising a family)*. So, this is my attempt at slaying the zombie idea that succeeding in academia requires working as much as an investment banker**.

This post was inspired by this comment from dinoverm on last Friday’s linkfest post. In that post, I linked to the “7 Year Postdoc” article again, even though I had already linked to it earlier, because it kept coming up in conversations with grad students, postdocs, and new faculty. In linking to it on Friday, I said, “I really like the idea of deciding what you are okay with doing (maybe you aren’t willing to move anywhere in the country/world, or you really want to do a particular type of research but aren’t sure how ‘tenurable’ that line of work will be), and then using that to set boundaries on what you do as a faculty member. I think this perspective is really valuable for people who are considering stepping off the tenure track primarily because they’re worried about work-life balance or quality of life. Obviously getting tenure will require working hard, but the lore that it requires 80-hour work weeks and ignoring one’s non-work priorities is simply wrong, and I think this perspective is a good one for thinking about how to balance things.” That led to a discussion in the comments about how rare it is for someone to “admit” to not working 80 hours a week. We’ve discussed this in the comments before. (Thanks to Jeremy for figuring out where!) You should go read this entire comment from Brian, because it’s great. (The rest of that comment thread is worth reading, too. There are lots of good thoughts there about parenting and academia, in particular.) But, just to quote part of it here:

“I think it is time to start calling BS on such posturing. Nobody works 80 hours a week regularly (as she claimed in one post). It actually is physically impossible* over the long run. I used to be a consultant where you billed every hour. We were a bunch of type As in an environment where we were strongly encouraged to work long hours (indeed it’s how the company made money by paying us a fixed salary and billing hours worked). I think I exceeded 80 hours once in 9 years, and only rarely and only in times of crisis exceeded 60. The official company expectation was 45 (although of course if you wanted a good review you might aim to be a tad above rather than below). We don’t record hours in academia, but I know what 80 looks like and I know what 60 and 50 and 40 look like because I measured it so carefully for 450 weeks and I haven’t seen anything truly different here. Most young profs are in the 40-60 hour range is my belief with most in the lower half of that. And yes 50 hours plus rest of life feels crazy and insane. But stop saying it’s 80 and making everybody else feel guilty they’re not measuring up. The game is incented to exaggerate how much you work, so believe those numbers other people throw out at your risk.”

<cutting lots of great thoughts that you really should go read>

“*Do the math on working 80 hours/week: 112 waking hours – 14 hours/week eating/grooming/maintaining car and house – 5 hours commuting = 83 hours, and that is pretty sparse grooming and maintaining (e.g. no exercise), and nobody lives on 3 hours/week leisure time.”

Why does this myth persist? Probably it’s in part because, if you think everyone else is working 80 hours a week, it can seem risky to admit that you aren’t, since that could make you seem like a slacker.

But I think another important reason for the persistence of this myth is that people are bad at recognizing how much they actually work. Unlike Brian, most of us haven’t spent years tracking our exact hours worked, and so don’t have a realistic sense of what an 80-hour work week would really feel like. As a grad student and postdoc, I thought I worked really hard. But then I made myself start logging hours (sort of like I was keeping track of billable hours, though I was simply doing it out of curiosity). I was astonished at how little I actually worked. It was something like 6 hours of actual work a day. I never would have guessed it was that low. I hadn’t realized how much time I was spending on those seemingly little breaks between tasks. I used to count a sample, then go read an article on Slate, then go count another sample, then go read another article, etc. At the end of the day, if you’d asked what I’d done, I would have said I’d spent all day counting samples. But, in reality, I had probably only spent roughly half my day actually counting samples. I found this exercise really valuable and eye-opening. I think it did more than anything else to make me efficient in how I work. And working efficiently frees up lots of time for other things (including spending time with my kids). I’ve recommended this to people who were struggling to keep up with tasks they needed to accomplish, and have also recommended keeping track of basic categories (maybe research, teaching, and service) when doing this accounting to see if the relative time devoted to each seems reasonable.

So how much do I work? That has varied over the years, not surprisingly. When I started my first faculty position, there were times when I felt like I was working as hard as I possibly could, and I started to wonder if I was working 80 hours a week. So, I tallied the hours. It was about 60 hours/week. And that was during a really time-intensive experiment, and was a relatively short-term thing. (I’m not sure, but that might be similar to the amount I worked during the peak parts of field season in grad school.) I could not have maintained that schedule over several months without burning out, regardless of whether or not I had kids. Right now, I’d say I typically work 40-50 hours a week. I am in my office from 9-5, and I work as hard as I can during that time. I usually can get some work done after the kids go to bed, but there’s also prepping bottles to send to daycare the next day, doing dishes, etc., so I definitely have less evening work time than I used to. And I usually get a few hours total on the weekend to work, but that’s variable.

Again, I think the key is being efficient. This article has an interesting summary of the history of, and research behind, the 40-hour work week. It argues (with studies to back up the argument) that, after an 8-hour work day, people are pretty ineffective:

“What these studies showed, over and over, was that industrial workers have eight good, reliable hours a day in them. On average, you get no more widgets out of a 10-hour day than you do out of an eight-hour day. Likewise, the overall output for the work week will be exactly the same at the end of six days as it would be after five days. So paying hourly workers to stick around once they’ve put in their weekly 40 is basically nothing more than a stupid and abusive way to burn up profits. Let ‘em go home, rest up and come back on Monday. It’s better for everybody.”

That article points out that there is an exception: occasionally, you can increase productivity (though not by 50%) by going up to a 60-hour work week. But this only works in the short term. This matches what I’ve found in my own work (see previous paragraph) and also seems to match the quote from Brian above.

So, please, do not think that you need to work 80 hours a week in academia. If you are working that many hours, you are probably not being efficient. (I’m sure there are exceptional individuals who can work that long and still be efficient, but they are surely not the norm.) So, work hard for 40-50 hours a week (maybe 60 during exceptional times), and then use the rest of the time for whatever you like***. And, please, please, please, stop perpetuating the myth that academics need to work 80 hours a week.

* People who are regular readers of this blog will know that I don’t think there’s anything wrong with non-academic careers. I simply want people to make their decisions based on accurate information, and don’t want someone choosing to step off the tenure track primarily because of the myth that it requires 80-hour work weeks.

** As it turns out, investment bankers are being encouraged to work less, though “less” is still a whole lot by most standards. (Here’s another story on the same topic.)

*** I encourage exercise as one way to use some of that time. (Perhaps that’s not a surprise, given that I have a treadmill desk.) In talking with other academics, it seems that exercise is often one of the first things to go when things get busy. I enjoyed this post by Dr. Isis, which explains why she decided to start prioritizing exercise again. (The comments on that post are good, too.) When I made myself mentally switch from saying “I don’t have time to exercise” to “I am choosing not to prioritize exercise”, I suddenly got much better at working exercise into my schedule.

Author:

Original: Chronicle of Higher Education

This fall I’m serving as the designated coach for doctoral students in my department who are on the academic job market. They’re a talented group, with impressive skills, hopes, and dreams. I’m grateful to be guiding them, as they put their best selves before search committees. However, one part of the work is not all that pleasant: I also need to ready them to face mass rejection.

Regardless of any happy outcomes that may await, they’re about to endure what may be their first experience of large-scale professional rebuff. Before, during, and after college, they sought part-time and full-time jobs and applied to graduate schools. Naturally, they didn’t get some of those jobs, or didn’t get into some of those schools. But now they’re putting themselves in line for 40, 50, or more rejections within the space of weeks and months — on the heels of a grueling, humbling few years of dissertation writing.

I feel their pain, to some extent. Those of us on the job market a decade or more ago got our mass rejections in thin envelopes or via email in May or June, after we’d had a few closer looks and maybe even a job offer. Today’s candidates learn they’re out of the running for coveted jobs much sooner, and secondhand, by confronting another candidate’s report of an interview or an offer on the Academic Job Wiki.

That then-and-now difference got me thinking about how we teach graduate students to face academic rejection. Of course, we largely don’t. Rejection is something you’re supposed to learn by experience, and then keep entirely quiet about. Among academics, the scientists seem to handle rejection best: They list on their CVs the grants they applied for but didn’t get — as if to say, “Hey, give me credit for sticking my neck out on this unfunded proposal. You better bet I’ll try again.” Humanists — my people — hide our rejections from our CVs as skillfully as we can. Entirely, if possible.

That’s a shame. It’s important for senior scholars to communicate to those just starting out that even successful professors face considerable rejection. The sheer scope of it over the course of a career may be stunning to a newcomer. I began to think of my history of rejection as my shadow CV — the one I’d have if I’d recorded the highs and lows of my professional life, rather than its highs alone.

More of us should make public our shadow CVs. In the spirit of sharing, I include mine here in its rough outline, using my best guesses, not mathematical formulas. (I didn’t actually keep a shadow CV, despite predictable jokes I may have made in the past about wallpapering my bathroom with rejection letters.)

I made many failed attempts at getting my work in print, while learning how to write for new audiences and building relationships with editors. Let’s call this rejection factor 4x, on average, although many of those rejections were for pieces that never saw print at all.

In total, these estimates suggest I’ve received in the ballpark of 1,000 rejections over two decades. That’s 50 a year, or about one a week. People in sales or creative writing may scoff at those numbers, but most of my rejections came in the first 10 years of my academic career, when I was searching intensely for a tenure-track job. Very few came during the summer, when academic-response rates slow to a crawl. I remember months when every envelope and every other email seemed to hold a blow to the ego. My experience was not unusual. Unfortunately, a multiyear job search is, if anything, more common now for would-be academics than when I was on the market.

Most of us get better at handling rejection, although personally, it can still knock the wind out of me. Usually in those moments, I recall something a graduate-school professor once said after I railed at, and — much to my embarrassment — shed a few tears over a difficult rejection: “Go ahead,” he said. “Let it make you angry. Then use your anger to make yourself work harder.”

It sounds so simple. Whether any single rejection is fair or unfair doesn’t ultimately matter. What matters is what you do next. You could let rejection crush you. Or you could let it motivate you to respond in creative, harder-working, smarter-working ways. (I’m convinced, though, that rejection is particularly tough to take in academe because so much of our work is mind work, closely tied to our own identities and sense of self-worth.)

A CV is a life story in which just the good things are recorded, yet sometimes I look at it and see there what others cannot: the places I haven’t been, the journals where my work wasn’t accepted, the times a project wasn’t funded, the ways my ideas were judged inadequate. I’ve started to imagine my CV as a record of both highlight-reel wins and between-the-lines losses. If you’re lucky, you will, like me, also one day come to recognize the places where the losses — as painful as they were at the time — led to unexpectedly positive things. Slammed doors, it turns out, may later become opened ones.

When I was meeting with my department’s academic-job seekers recently, one of them asked me about the last time I was rejected.

“My last rejection was one week ago,” I admitted to them, feeling uncomfortably like someone introducing myself at an AA meeting. “I got two rejections, in fact. One was really, really hard to accept, and, I think, wrong. But I’ll take it for what it’s worth and try again.”

Increasingly, I see rejection as a necessary part of every stage of an academic career. I remind myself that the fact that I’m still facing rejection is evidence that I’m still in the game at a level where I should be playing. I’m continuing to hone my skills and strive for better opportunities — continuing to build both my CV and my shadow CV. Each version is necessary as we seek to advance our research, teaching, and service, the activities to which some of us — and I wish there were many more of us — have the good fortune to devote our professional lives.

Author: Jake Jackson

Original: PhDisabled

Content note: This post involves frank discussion of the experience of depression and includes reference to the recent suicide of Robin Williams.

A few months ago, the night before a conference in which I was participating, I let slip to the Chair of a philosophy department that I often have trouble sleeping. He asked why.

Realizing I may have revealed more than was perhaps appropriate with someone I had just met, I stammered: “Why, I’m an existentialist!”

The catchphrase fit. After all, the next day I was presenting a paper that dealt with Kierkegaard and Nietzsche on (un)certainty and faith. He laughed and made a joke of it himself, but he also gave me a knowing yet compassionate look.

I was safe. Even in the form of a joke, this was perhaps one of only two instances where I have openly implied the presence of my lifelong depression to a tenured faculty member in my field without regretting it or worrying about how it might affect their perception of me.

This post seeks to question the way that academic philosophy perceives depression. I am not writing this with statistics or numbers, but instead from the subjective phenomenological perspective of someone who has depression and who works in – and aspires to build a career in – academic philosophy.

I seek not to grind an axe against any particular persons or institutions, but instead want to focus on the sort of social context confronted by those with depression, based on my lived experiences.

Depression is an alienating illness, especially when coupled with anxiety, as happens frequently. In my experience in academic philosophy circles, that alienation is amplified since mental health is not spoken of as a real entity. It is instead catalogued and discriminated by logic and reason as something other, an outside factor. The depressed are outsiders.

Depression is treated with a deafening silence, both inside of the academy and outside in society at large.

There is a social unseemliness to discussions of depression. Mental illness is a two-fold problem, private and yet public: private in that it is often suffered alone, public in that its effects reach out further than just the atomized individual.

Social behavior is socially determined, or at least, prescribed. This naturally turns the personal experiences and troubles of every private individual into a public concern. When someone admits to experiencing depression, whether chronic or a phase, this fact becomes a public concern. We look to role models, and find only the public shaming of role models who suffer from mental illness. Public figures who admit to mental illness are hurriedly questioned about the intimate details of their struggle. Everyone has an opinion on mental illness, and most of these opinions are not only wrong but directly harmful to both the individuals who suffer silently and society at large.

We have not moved beyond a society that sees mental illness as a stain on one’s soul, a present-day demon that continues to torment mortals. Mental illness still stands as something to be ashamed of because we want to believe in karma or something similar. We want to believe that the ills we suffer are somehow something we deserve.

Those of us who are more scientifically inclined want to believe that we can redeem and fix mental illness, as if it were machinery. If we could only figure out the brain, then we believe that we could “normalize” it, or better, “cure” it.

We wish for so much that it blots out the actual condition. All this wishing and hoping is a flight from the actual day-to-day concerns of depression. As Nietzsche states, “Hope is the worst of all evils, for it prolongs the suffering of people.”

Anything that disturbs a social norm makes everyone uncomfortable or at the very least brings up strong opinions. The recent suicide of Robin Williams has shown us yet again that the public doesn’t like talking about depression, certainly not in honest terms. Any suicide, but especially that of a public figure, becomes hyper-moralized. Now come the people who condemn Williams with words such as “cowardly” or “selfish” for taking his own life, yet also call him “brave” for struggling with his depression for so long. Other foolish moralists will say that depression is a divine gift because it goes hand in hand with comedic ability.

These moral arguments resurface every time, and every time in vain, because they try to rationalize the brutally irrational. The overbearing social stigma of depression makes a lot of sense at times. It is very uncomfortable to think that one can be one’s own worst enemy, that the mind can so pessimistically stand against reason or external pleasures. It is, indeed, unseemly.

However, it is this very unseemliness that is the reason that depression should be more openly discussed. It is constantly suppressed socially into restrictive norms that only exponentially increase depression’s own horrid effects of alienation and resentment.

High hopes for radical social change regarding mental health may well end in nothing but disappointment. This, however, does not mean that one should give up hope for change and radical action.

I think it should be the job of philosophy to demand that society’s discourse regarding mental health become less awful. Good philosophy should offer alternatives for social problems, or at the very least scold the often careless ideologies that cause them.

But first, academic philosophy itself needs to turn its gaze to depression and how it is treated within its own ranks. We treat it with silence. No one finds it polite to speak of it, unless talking about the personal lives of the dead or as a dry systematic theory. We philosophers prefer to hold depression at arm’s length, even though it often lives so close within our chests as a tightening knot limiting our actions.

Depression is brutally irrational. It does not care for one’s successes, relationships, or anything else that is valued for a so-called good life. No matter how much one moves towards eudaemonia in one’s life, depression is there, lurking. As Winston Churchill described it, depression follows one around like a big black dog ever obedient to its master.

Depression drives me to gaze into abysses.

My philosophical interests rest at the intersection of ethics, phenomenology, and existentialism. I work heavily in Nietzsche and late Husserl, but have recently expanded into working on Kierkegaard and Sartre. None of these historical figures is light reading in any sense of the term. Nietzsche was clearly the king of the abyss and suffered a horrifying, debilitating illness which destroyed his mind and his body. Husserl lost a son to the First World War and, towards the end of his life, witnessed his rights dissolve as a Jewish intellectual in Germany. Kierkegaard struggled with his faith and anxiety throughout his life’s work. Sartre fought in the Second World War in the French Resistance and was notoriously bitter in his personal relationships. None of these figures are happy role models. A certain sadness produces good work, it would seem. That same certain sadness shows on the page. I could, perhaps, “lighten up” and go towards lighter fare, work on thinkers who don’t reach such sad depths, but I don’t find much interest in such things. I instead stay the course in developing an ethics that looks right into the horrible things that people do.

My depression drives me towards a weighted sense of responsibility and is the reason I work in philosophy and ethics.

But we do not want to talk about it in the Academy. Despair and anxiety are seen as better suited to a dissection table in a sterile setting. Even if depression is what drives us towards prolific writing, we stay quiet on its daily presence. We speak instead of depression as the motive for past generations, holding off from any honesty about ourselves and our motivations today.

In my MA program, I interacted with several other graduate students in philosophy who had different approaches towards depression, but universally, it was treated as a shameful subject. Many acted horribly insecure about their mental health, either secretive or, worse, bullying others who showed any sign of depression, perceiving it as a weakness and those who evinced it as prey.

I did speak with colleagues about my depression and anxiety. It hardly went well. One especially insecure classmate spoke with a nostalgia for the days when depression was called melancholia. In other words, he pined for the ‘good old days’ of misdiagnosis and mistreatment at the hands of deliberately ableist pseudoscience. Another former classmate who studies the intersections of psychoanalysis and philosophy quite hypocritically mocks anyone who is honest about their feelings. So moving forward, I buried mine.

Consequently, I let my depression take too much hold over me during this program. Things got particularly low when I faced a major setback in my studies at the very same time that I had a dramatic falling-out with some family members. My worsening depression alienated me from friends and colleagues. It fed itself. At the insistence of my spouse, I finally sought professional help, which allowed me to bring my depression and anxiety to a much more manageable state. Even so, I stayed ashamed of my condition throughout my MA program. I avoided talking to anyone in my department about anything at all, let alone my depression.

When I began taking antidepressants and laid off drinking for a couple of weeks to regulate, one of my classmates noticed. I mentioned that I was on a new medication; I did not mention what. He too gave that knowing and understanding look.

We looked at each other, both knowing that we were struggling with the same condition, but saying nothing. We never said a thing about it.

There’s a certain intersubjective co-understanding here: the depressed recognize the depressed easily. But, ashamed, we say nothing for fear of outing ourselves by admitting anything honestly. Perhaps it was the program I was in, but insecurities ratcheted up and became more secret, more insecure, and ready to explode.

Instead, I spoke to others outside of my department through internet communities that understand and practice an important sense of honesty regarding disability. It just wasn’t ‘proper’ to talk to those whom I knew in my program.

All of this shaming stigma needs to stop. Academia, and academic philosophy particularly, can be a stressful enough environment as it is. Simply being within the Ivory Tower already stirs up all of our insecurities, let alone trying to stay there. Impostor syndrome is rife, and shame about mental illness is pervasive. At the very least, all this mental illness-shaming seems like a waste of time and energy. At the very worst, it creates a subculture of alienated, disillusioned individuals who cannot trust one another, or their own attempts to see the strength inherent in the hard work they invest in living – surviving – with depression.

Soon after the First World War and the loss of his son, Husserl wrote to Arnold Metzger:

“You must have sensed that this ethos is genuine, because my writings, just as yours, are born out of need, out of an immense psychological need, out of a complete collapse in which the only hope is an entirely new life, a desperate, unyielding resolution to begin from the beginning and to go forth in radical honesty, come what may.”

Mental illness must be treated with a collective commitment to radical honesty that comes from recognizing our shared responsibility to ourselves and each other.

We academic philosophers must take up this radical honesty about mental illness before we reach that collapse.

We need to look into our motivations more critically in order to live more ethically together. If we are to present ourselves as a community of critical thinkers, we must open the discourse on mental health. This is not a call for sympathy, but for honesty among all parties involved in academia. Now, as I start a new PhD program, I am hoping to overcome oppressive silence with radical honesty, staying open with others and combating shaming stigma whenever I find it.

Author: Richard Van Noorden

Original: Excerpts reprinted by permission from Macmillan Publishers Ltd: Nature 512, 126–129, copyright (2014)

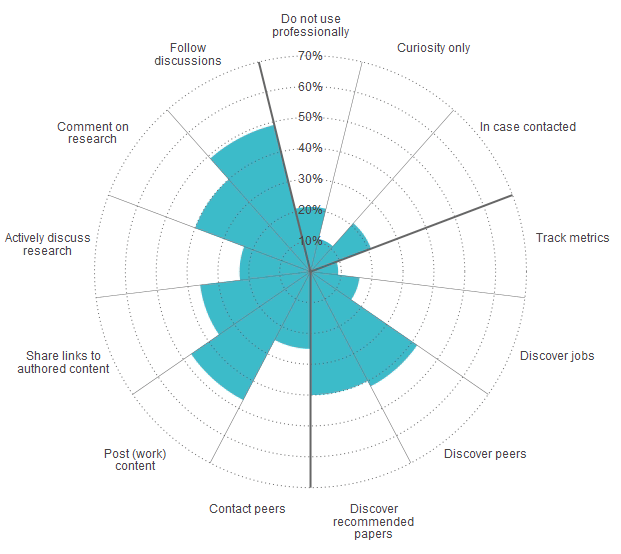

Why scholars use social media (Twitter)

Adapted by permission from Macmillan Publishers Ltd: Nature 512, 126–129, copyright (2014)

“A few years ago, the idea that millions of scholars would rush to join one giant academic social network seemed dead in the water. The list of failed efforts to launch a ‘Facebook for science’ included Scientist Solutions, SciLinks, Epernicus, 2collab and Nature Network (run by the company that publishes Nature). Some observers speculated that this was because scientists were wary of sharing data, papers and comments online — or if they did want to share, they would prefer to do it on their own terms, rather than through a privately owned site.

But it seems that those earlier efforts were ahead of their time — or maybe they were simply doing it wrong. Today, ResearchGate is just one of several academic social networks going viral. San Francisco-based competitor Academia.edu says that it has 11 million users. ‘The goal of the company is to rebuild science publishing from the ground up,’ declares chief executive Richard Price, who studied philosophy at the University of Oxford, UK, before he founded Academia.edu in 2008, and has already raised $17.7 million from venture capitalists. A third site, London-based Mendeley, claims 3.1 million members. It was originally launched as software for managing and storing documents, but it encourages private and public social networking. The firm was snapped up in 2013 by Amsterdam-based publishing giant Elsevier for a reported £45 million (US$76 million).”

“Despite the excitement and investment, it is far from clear how much of the activity on these sites involves productive engagement, and how much is just passing curiosity — or a desire to access papers shared by other users that they might otherwise have to pay for. . . . In an effort to get past the hype and explore what is really happening, Nature e-mailed tens of thousands of researchers in May to ask how they use social networks and other popular profile-hosting or search services, and received more than 3,500 responses from 95 different countries.”

For the study infographics, more on the survey findings, and the complete Nature article: http://www.nature.com/news/online-collaboration-scientists-and-the-social-network-1.15711.

Author: Melonie Fullick

Original: University Affairs | Speculative Diction

Recently University Affairs published an interview with Kevin Haggerty and Aaron Doyle, two Canadian professors who have written a book of advice for graduate students. The book’s gimmick, if you want to call it that, is that it’s presented as a guide to failing—an anti-guide, perhaps?—as evidenced by the title, 57 Ways to Screw up in Grad School: Perverse Professional Lessons for Graduate Students. According to Haggerty and Doyle, “students often make a series of predictable missteps that they could easily avoid if they only knew the informal rules and expectations of graduate school.” If only! And this book, we’re told, is designed to help solve that problem.

Dropping all sarcasm, the first thing I have to say is: really?

… grad students’ “failure” is somehow all about the mistakes they make? How many times do we have to take this apart before faculty giving this kind of “advice” start realizing how it sounds? Maybe this is just a part of the “joke” and I’m not getting it, but how long is it going to be before the irrational and erroneous assumption that student success is entirely about individuals and their intrinsic merit and skills is displaced by a more realistic perspective?

Haggerty and Doyle have been promoting their book in the higher ed press for a couple of months now. While the University Affairs interview is relatively subdued, I want to draw your attention to their August 27th piece in Times Higher Education (THE), in which we were treated to a lively sample of just 10 of the ways grad students can ruin their own chances of academic success. Back when the article was first published I shared a series of critiques on Twitter; I’m going to risk boring you by repeating them here, because the book is receiving attention and there are some fundamental problems with the ideas that it reflects and reinforces.

At the outset, the authors explain how they’ve “concluded that a small group of students actually want to screw up. We do not know why. Maybe they are masochists or fear success.” This sort of set-up trivializes and dismisses serious problems; but things get worse from that point. Here are a few of the “screw ups” listed in the THE article, along with some of the criticisms they provoked from me and others:

I hope you’ll forgive me for not finding the topic of grad student “failure” an amusing one. Usually I like a good joke (especially at the expense of academe), but I just don’t see how it’s appropriate for this issue. The “light-hearted” approach is grating to me, and I wasn’t alone. Reactions to the article included: “horribly smug”; “their post is not amusing”; “I’m a Professor who has supervised dozens of PhDs and I disagree with almost all of what the authors said”; “this is ridiculous”; “clickbait, consumerism, classism”; “I had trouble getting past #1”; and “much could be turned around into ‘do your job, grad schools.’”

What’s even more frustrating is that almost every point made in the THE article could have been made in a helpful, critical and inclusive way, and simply wasn’t. In choosing this particular approach to “advice”, the authors render their points not only unpalatable but also condescendingly uncritical. Even if the advice is potentially of use, why put it in terms that are exclusionary to some students and infantilizing to all? The authors argue that they’re sharing tacit knowledge, thus doing us all a favour. But they also seem to be ridiculing students for not having this knowledge at the outset. The use of the word “guilty” (in their interview) just reinforces the feelings many students already experience when they discover something’s going wrong.

Haggerty and Doyle aren’t alone in their assumptions, and that’s why these kinds of articles and books represent a problem. They aren’t mere one-offs; as I’ve argued before (and no doubt you’re all sick of hearing it), it’s still too convenient for graduate programs and supervising faculty to dismiss students’ “failure” as a problem with selection of students, students’ lack of commitment, and/or a bad “fit”—an approach that shifts the blame away from problems of supervisory competence, appropriate social and academic support, and departmental climate and culture. That this perspective is espoused publicly by respected senior faculty members who not only supervise grad students but have also spent time as graduate chairs, shows how pervasive and influential it is in academe.

As always, I’m not trying to argue that students have no responsibility for their own success. What I’m responding to is the framing of this as a problem almost entirely in their hands. We already know (from research, in fact) that this is an inaccurate depiction, and that students’ experiences in graduate education are affected at least as much by the supervisor, department, and peer group—as well as by structural factors such as class, race, gender, and disability—as they are by individual merits and choices.

I’m aware that the book will provide more detail than a short post on THE, but because it’s the framing rather than the content that’s a problem, maybe “less is more.” You don’t need a book like this when the same or better advice is available from people who’ll give you a constructive and critical perspective on professionalization and the norms and values of academe—the latter having been taken for granted by Haggerty and Doyle. I recommend you check out those diverse perspectives instead—there are too many to list here, but a few online sources that spring to mind are Pat Thomson, The Thesis Whisperer, Conditionally Accepted, PhDisabled, Explorations of Style, Gradhacker, and also (for some background) the bibliography of research on doctoral education that I linked to above. You can also try #phdchat on Twitter, where you’ll find a wealth of resources.

Given the variety and quality of the research and resources available, surely at this stage there’s no excuse to reiterate the same old tired themes about irresponsible students and the silly mistakes they make. I only hope we can move beyond this in future debates about graduate education.

Author: Inger Mewburn

Original: The Thesis Whisperer

Two of my favourite people in the academic world are my friends Rachael Pitt (aka @thefellowette) and Nigel Palmer. Whenever we have a catch-up, which is sadly rare, we have a fine old time talking shop over beer and chips (well, lemonade in my case, but you get the picture).

Some time ago Rachael started calling us ‘The B Team’ because we were all appointed on a level B in the Australian university pay-scale system (academic Level B is not quite shit-kicker entry-level academia, which is level A in case you were wondering, but it’s pretty close). I always go home feeling a warm glow of collegiality after a B team talk, convinced that being an academic is the best job in the entire world. Rachael reckons that this positive glow is a result of the ‘circle of niceness’ we create just by being together and talking about ideas with honesty and openness.

Anyway, just after I announced my appointment as director of research training at ANU, the B team met to get our nerd on. As we ate chips we talked about my new job, the ageing academic workforce and research student retention rates. Then we got to gossiping, as you do.

All of us had a story or two to tell about academic colleagues who had been rude, dismissive, passive aggressive or even outright hostile to us in the workplace. We had encountered this behaviour from people at level C, D and E, further up in the academic pecking order, but agreed it was most depressing when our fellow level Bs acted like jerks.

As we talked we started to wonder: do you get further in academia if you are a jerk?

Jerks step on, belittle or otherwise sabotage their academic colleagues. The most common methods are criticising their opinions in public, at a conference or in a seminar, and trash-talking them in private. Some ambitious sorts work to cut others, whom they see as competitors, out of opportunities. I’m sure it’s not just academics on the payroll who have to deal with this kind of jerky academic behaviour. On the feedback page to the Whisperer I occasionally get comments from PhD students who have found themselves on the receiving end, especially during seminar presentations.

I assume people act like jerks because they think they have something to gain, and maybe they are right.

In his best-selling book ‘The No Asshole Rule’, Robert Sutton, a professor at Stanford University, has a lot to say on the topic of, well, assholes in the workplace. The book is erudite and amusing in equal measure and well worth reading, especially for the final chapter, where Sutton examines the advantages of being an asshole. He cites work by Teresa Amabile, who did a series of controlled experiments using fictitious book reviews. While the reviews themselves essentially made the same observations about the books, the tone in which the reviewers expressed their observations was tweaked to be either nice or nasty. What Amabile found was:

… negative or unkind people were seen as less likeable but more intelligent, competent and expert than those who expressed the same messages in gentler ways

Huh.

This sentence made me think about the nasty cleverness that some academics display when they comment on student work in front of their peers. Displaying cleverness during PhD seminars and during talks at conferences is a way academics show off their scholarly prowess to each other, sometimes at the expense of the student. Cleverness is a form of currency in academia; or ‘cultural capital’ if you like. If other academics think you are clever they will listen to you more; you will be invited to speak at other institutions, to sit on panels and join important committees and boards. Appearing clever is a route to power and promotion. If performing like an asshole in a public forum creates the perverse impression that you are more clever than others who do not, there is a clear incentive to behave this way.

Sutton claims only a small percentage of people who act like assholes are actually sociopaths (he amusingly calls them ‘flaming assholes’) and talks about how asshole behaviour is contagious. He argues that it’s easy for asshole behaviour to become normalised in the workplace because, most of the time, the assholes are not called to account. So it’s possible that many academics are acting like assholes without even being aware of it.

How does it happen? The budding asshole has learned, perhaps subconsciously, that other people interrupt them less if they use stronger language. They get attention: more air time in panel discussions and at conferences. Other budding assholes watch strong language being used and then imitate the behaviour. No one publicly objects to the language being used, even if the student is clearly upset, and nasty behaviour gets reinforced. As time goes on, the culture progressively becomes more poisonous and gets transmitted to the students. Students who are upset by the behaviour of academic assholes are often counselled, frequently by their peers, that “this is how things are done around here”. Those who refuse to accept the culture are made to feel abnormal because, in a literal sense, they are, if being normal means being an asshole.

Not all academic cultures are badly afflicted by assholery, but many are. I don’t know about you, but seen this way, some of the sicker academic cultures suddenly make much more sense. This theory might explain why senior academics are sometimes nicer and more generous to their colleagues than those lower in the pecking order. If asshole behaviour is a route to power, those who already hold positions of power in the hierarchy and are widely acknowledged to be clever have less reason to use it.

To be honest with you, seen through this lens, my career trajectory makes more sense too. I am not comfortable being an asshole, although I’m not going to claim I’ve never been one. I have certainly acted like a jerk in public a time or two in the past, especially when I was an architecture academic, in a field where a culture of vicious critique is quite normalised. But I’d rather collaborate than compete, and I don’t like confrontation.

I have quality research publications and a good public profile for my scholarly work, yet I found it hard to get advancement in my previous institution. I wonder now if this is because I am too nice and, as a consequence, people tended to underestimate my intelligence. I think it’s no coincidence that my career has only taken off with this blog. The blog is a safe space for me to display my knowledge and expertise without having to get into a pissing match.

Like Sutton I am deeply uncomfortable with the observation that being an asshole can be advantageous for your career. Sutton takes a whole book to talk through the benefits of not being an asshole and I want to believe him. He clearly shows that there are real costs to organisations for putting up with asshole behaviour. Put simply, the nice clever people leave. I suspect this happens in academia all the time. It’s a vicious cycle which means people who are more comfortable being an asshole easily outnumber those who find this behaviour obnoxious.

Ultimately we are all diminished when clever people walk away from academia. So what can we do? It’s tempting to point the finger at senior academics for creating a poor workplace culture, but I’ve experienced this behaviour from people at all levels of the academic hierarchy. We need to work together to break the circle of nastiness.

It’s up to all of us to be aware that we have a potential bias in the way we judge others, and that cleverness comes in nice and nasty packages. I think we would all prefer, for the sake of a better workplace, that people tried to be nice rather than nasty when giving others, especially students, criticism about their work. Criticism can be applied gently and firmly; it doesn’t have to be laced with vitriol.

It’s hard to do, but wherever possible we should work on creating circles of niceness. We can do this by being attentive to our own actions. Next time you have to talk in public about someone else’s work, really listen to yourself. Are you picking up a prevailing culture of assholery?

I must admit I am at a bit of a loss for other things we can do to make academia a kinder place. Do you have any ideas?

Author: Jason Schmitt

Original: Huffington Post

The music business was killed by Napster; movie theaters were derailed by digital streaming; traditional magazines are in crisis mode. Yet in this digital information wild west, academic journals and the publishers who own them are posting higher profits than nearly any sector of commerce.

Academic publisher Elsevier, which owns a majority of the prestigious academic journals, has a higher operating profit margin than Apple. In 2013, Elsevier posted a 39 percent profit margin, compared with Apple’s 37 percent, according to Heather Morrison, assistant professor at the University of Ottawa’s School of Information Studies.

The lucrative nature of academic publishing comes at a price, and that weight falls on the shoulders of the entire higher education community, which is already bearing the burden of significantly decreasing academic budgets. “A large research university will pay between $3 million and $3.5 million a year in academic subscription fees, the majority of which goes to for-profit academic publishers,” says Sam Gershman, a postdoctoral fellow at MIT who will take up a post as an assistant professor at Harvard next year. In contrast to the exorbitant prices for access, the majority of academic journals are produced, reviewed, and edited on a volunteer basis by academics who take on these tasks as part of earning tenure and promotion.

“Even the Harvard University Library, which is the richest university library in the world, sent out a letter to the faculty saying that they can no longer afford to pay for all the journal subscriptions,” says Gershman. While this current publishing environment is hard on large research institutions, it is wreaking havoc on small colleges and universities because these institutions cannot afford access to current academic information. This is clearly creating a problematic situation.

Paul Millette, director of the Griswold Library at Green Mountain College, a small, 650-student environmental liberal arts college in Vermont, talks of the enormous pressure that access to academic journals has placed on his library budget. “The cost of living has increased at 1.5 percent per year, yet the journals we subscribe to have consistent increases of 6 to 8 percent every year.” Millette says he cannot afford to keep up with the continual increases, and the only way his library can afford access to journal content now is through bulk databases. Millette points out that database subscriptions seldom include the most recent material: publishers purposely impose an embargo of one or two years on the most current information, so libraries still need to subscribe to the journals directly. “At a small college, that is what we just don’t have the money to do. All of our journal content is coming from the aggregated database packages, like a clearing house, so to speak, of journal titles,” says Millette.

“For Elsevier it is very hard to purchase specific journals: either you buy everything or you buy nothing,” says Vincent Lariviere, a professor at Université de Montréal. Lariviere has found that his university uses 20 percent of the journals it subscribes to, while the other 80 percent are never downloaded. “The pricing scheme is such that if you subscribe to only 20 percent of the journals individually, it will cost you more money than taking everything. So people are stuck.”

Where To Go:

“Money should be taken out of academic publishing as much as possible. The money that is effectively being spent by universities and funding agencies on journal access could otherwise be spent on reducing tuition, supporting research, and all things that are more important than paying corporate publishers,” says Gershman. John Bohannon, a biologist and Science contributing correspondent, agrees: “Certainly a huge portion of today’s journals could and should be just free. There is no value added in going with the traditional model that was built on paper journals, with having people whose full time job was to deal with the journal, promote the journal and print the journal, and deal with librarians. All that can now be done essentially for free on the internet.”

Although this sounds like the clear path toward the future, Bohannon says that, from his vantage point, it is not one-size-fits-all: “The most important journals will always look pretty much like they do today because it is actually a really hard job.” Bohannon believes that broad journals such as Science, Nature, and Proceedings of the National Academy of Sciences (PNAS) will always need private funding to carry out their broad publication tasks.

Another Option?

“A better approach to academic publishing is to cut out the whole notion of publishing. We don’t really need journals as traditionally conceived. The primary role of traditional journals is to provide peer review, and for that you don’t need a physical journal; you just need an editorial board and an editorial process,” says Gershman.

Gershman lays out his vision for the future of academic publishing: a very different sort of publishing system in which everybody could post papers to a pre-print server similar to the existing arXiv.org. After posting the research, the author chooses to submit it to a journal, which essentially means sending the journal the link to the paper on the pre-print server. The journal’s editorial board carries out the same editorial process that exists now. If the paper is accepted, the journal can put its imprimatur on it, saying it was accepted to this journal, but there is no actual journal; it is just a stamp of approval.

What Gershman’s concept does is remove most of the costs from the equation. The cost of running this pre-print server would be shared by all universities and funding agencies, and could return millions, perhaps billions, of dollars to the broader higher education system if an overarching system were implemented and respected. Bohannon is not convinced this is an easy sell. “We would need a real revolution. By revolution I mean a cultural revolution among academics. They would have to totally change the way they do business and, despite having the reputation of being revolutionary, academics are pretty conservative. As a culture, academia moves pretty slow.” Nathan Hall, professor at McGill University, follows Bohannon’s reasoning and says, “I think there is a sense of security in maintaining a set of agreements with known publishers with reputations like Wiley or Elsevier. I think universities aren’t quite aware of the benefits and logistics of a new system and they are comfortable maintaining existing relationships despite some questionability for what the publishers are providing.”

Open Access for the Future?

“The phrase ‘open access’ can mean several things,” says Lariviere. Broadly, open access refers to unrestricted online access to peer-reviewed research. Lariviere details how publishers have co-opted this terminology and, in doing so, perhaps increased their profits further. “Elsevier says you can publish in open access, but in reality it means paying twice for the papers. They will ask me ‘do you want to publish your paper open access’ which means paying between $500 and $5,000 additional for that specific paper to be freely available to everyone. At the same time, they will not reduce the subscription cost to the overall journal, which means they are making twice the money on that specific paper. If you ask me if this type of open access is the way to go, the answer is no.”

Luckily, large granting bodies have begun using their clout to push toward true open access. The National Institutes of Health (NIH) has been a longstanding champion of open access. Since 2008, the NIH has had a mandate for all research funded by that body to be published open access. Recently, the Bill and Melinda Gates Foundation brought its clout into the open access conversation. Starting in January 2015, all work funded through the Gates Foundation will be open access, and the foundation says: “We have adopted an Open Access policy that enables the unrestricted access and reuse of all peer-reviewed published research funded, in whole or in part, by the foundation, including any underlying data sets.”

As higher education is redefined to meet the needs and affordability requirements of the 21st century, the most basic functions of sharing academic research certainly need to be retooled. There is no reason an academic publisher should have such a significantly different economic picture from standard publishers. The stark contrast is troubling, as it shows just how far from reality our higher education system has drifted. Correspondingly, there is no reason universities should pay $3.5 million to have access to peer-reviewed data. This academic conversation is society’s conversation, and it is time that the digital revolution level one last playing field: because we, the people, deserve access.

Author: Shawna Wagman

Original: University Affairs (10/21/2015)

Since Nathan Hall introduced the world to Shit Academics Say in 2013, his humorous Twitter account has become one of the most popular related to academia, with nearly 140,000 followers. Dr. Hall, an associate professor in the department of education and counselling psychology at McGill, says his once anonymous Twitter persona (he outed himself, so to speak, in an article in the Chronicle of Higher Education in July) has woven itself into his life. His Twitter presence is part pastime, part social media experiment and part catalyst for his investigation into the subject of psychological well-being in academia. He recently spoke to University Affairs about his adventures.

University Affairs: How did you first get interested in Twitter?

Dr. Hall: At first I went on [Twitter] admittedly for some self-promotion, to share the research that I had been doing. People didn’t really seem to care for that. Then I saw these accounts – parody accounts, joke accounts and meme accounts – that were taking off fairly quickly and I was curious about how they were doing it. There was the well-known Shit My Dad Says account – that’s actually how I learned about Twitter and why I thought it might be interesting. Also, there had been a lot of anxiety for me getting ready for tenure. At the time I was feeling fairly burnt out and disillusioned and I actually wanted to see if people felt the same way I did. What I realized is that people online, on Twitter and other social media, were engaged in sharing things more widely, talking more candidly about issues. I felt like I was missing out.

UA: What did you think you were missing?

Dr. Hall: I realized that online, people were talking about the human issues related to the profession. I discovered this whole anonymous professors’ subculture on Twitter where they were connecting with each other over manuscript reviews and students. I started watching the accounts that were popular, like that of Raul Pacheco-Vega, watching what he was doing to connect scholars with each other. I was also watching meme accounts, like Research Ryan that got pretty popular. I had to google what the word “meme” meant to find out what it was. Then I attempted my own – Research Wahlberg (appropriating the image of American actor Mark Wahlberg) – on a dare to share a laugh and connect with people over issues that I thought I struggled with alone. Basically I settled on Wahlberg as an unconventional character trying to pass off statistics and research methods while being flirty or seductive, which to me was just a joke in itself. Eventually it got awkward looking at half-naked pictures of Mark Wahlberg when I was on the train during my commute to work.

UA: And from there you started your Twitter account, Shit Academics Say?

Dr. Hall: It was actually my wife who suggested I needed a new hobby. At that point I had done what I needed to do to get tenure and so I had a bit more time to think. I started the account to try to talk about the kinds of things faculty talk about when they are finished class, when they see other faculty in the hallway, when they go to the mailroom or chat with the staff or admin people. I wanted to tap into that, an after-hours approach to how faculty feel privately, on the weekends when they are on a date and feeling guilty about not writing, or when they are in a meeting with a student. It’s the self-talk that you hear yourself engaging in. I wasn’t that comfortable with that because I’m still relatively new to the profession, I’ve been working for about six years. What I realized about social media is that it’s more fun when more people are playing. I thought I would try to get more people involved and I thought humour might be a good way to do that.

UA: Why did you make the account anonymous?

Dr. Hall: I started off anonymously just to make sure my age, race, gender, discipline, none of that, would get in the way of the content. I think removing myself and my ego allowed the account to travel faster because the focus was on the people reading it and sharing things rather than on the person writing it. Given that I was trying to be funny, I think people gave me the benefit of the doubt. There were a lot of jokes that really weren’t very funny. People replied saying thank you for trying to share some laughs.

UA: What are some of the rules or parameters you set for yourself about how you engage on Twitter with this account?

Dr. Hall: Certain things I did to mimic other accounts, while other things I developed on my own. I learned that the best way to try to grow an account quickly is using implicit cues to convey authority. I don’t have questions, I have statements. There are no question marks, no exclamation points, no all-caps. I hoped that people would recognize that this was a little bit of a persona, a gag, a shtick, much like Kanye West refusing to smile in photos. There are other things I do that are pretty typical on Twitter to command authority: I don’t follow anyone, I don’t reply or retweet. It’s part of a branding strategy to get your account to grow more quickly.

I also engage in timeline grooming, which means I delete tweets that don’t hit very well with people – it makes everything you produce look more authoritative. People don’t realize you can delete tweets and that you can manage an online presence the way you would host a dinner party, in that you clean up ahead of time. People come to the account and they see a low number of tweets with a high number of followers and each tweet has a high number of retweets. All of this combines to make for an authoritative persona which I then counter with clear and honest heartfelt expressions of gratitude for people supporting the account.

UA: Let’s talk about being funny. How do you come up with the funny tweets? Have you discovered an academic sense of humour?

Dr. Hall: I’m not sure if there is an academic sense of humour. My sense of humour tends to be a bit dry, deadpan, a bit of a buzzkill type thing. To me it’s very funny if it makes people laugh at something they shouldn’t be laughing at. It’s more meta-level: I make jokes about making jokes; jokes about metaphors. The sequence of the tweets is sometimes a joke to me too: I post one thing that’s very funny and then one thing that’s hilarious and then one thing about how an academic quit his job, so it’s a rhythm and it actually builds momentum.

I tend to use idioms and proverbs. I like to take quotes that have been done before and just change the ending. For example, “If you don’t have anything nice to say, say it as a question.” Or “If you can’t explain it to a six-year-old, you don’t understand it yourself.” That’s a quote from Albert Einstein but I attribute it to “someone with limited experience trying to explain things to a six-year-old.” Some of these quotes, they sound very profound but when you try and incorporate them as useful life lessons in academia, they aren’t as useful as you might think.

UA: How do you incorporate tweeting into your life? Do you dedicate a certain amount of time each day to working up jokes, or do you wait for inspiration to strike?

Dr. Hall: I drop my daughter off at daycare and my son off at school and then I have a 47-minute train ride and an 18-minute walk to get up the hill to my office at McGill, so some days I can be commuting for three hours. That’s why I ended up getting on Twitter in the first place. On the train you don’t have room for a laptop but you do have room for a cell phone to scan what people are writing and thinking. Other times throughout the day (waiting at the Starbucks drive-thru, waiting at my daughter’s ballet lesson, or while I’m on the treadmill), I use that time to read things on Twitter. I could also dictate text into my phone and release it later. I could actually crowd source in the sense that I could put something online, and people would comment back, which would give me more ideas about the kinds of things people wanted to joke about. It’s a bit of a weird juxtaposition because when I get notifications on my phone, I’m often in weird places. I’m outside Pharmaprix finding out that I’m being discussed on CBC radio, or coming out from watching Captain America to find out that the hashtag I started, #yomanuscript, was trending in Australia for some reason. The online Twitter celebrity juxtaposed with everyday family and academic life I find amusing. You’re changing a diaper and meanwhile on Twitter, people are calling you brilliant.

UA: What has Twitter celebrity done for you?

Dr. Hall: It really hasn’t done much. It’s just an opportunity to promote my work and give back. I’m very fortunate; a lot of people don’t have this. To receive tenure actually comes with a bit of privilege guilt. I have more time and I feel obligated to give back.

UA: Would you still stand behind your very first tweet: “Don’t become an academic”?

Dr. Hall: I am not sure. I was being sarcastic. I had become disillusioned from being on the tenure track and being burnt out after applying for grants that I didn’t need to try and demonstrate fundability, teaching classes that I didn’t have expertise in, and nominating myself for teaching awards in order to make sure I could keep my job. Everything on the Twitter account has a touch of sarcasm and often it has more than one meaning behind it.

UA: Tell me about the research that has come out of this social media experiment.

Dr. Hall: Since January of this year I’ve run three studies and recruited up to 9,000 people across almost 80 countries for research examining well-being and self-regulation in grad students and faculty. I’m looking at issues ranging from motivation and values, to procrastination, depression, work/life balance and coping strategies. I also look at hidden failure experiences that people don’t talk about. For example, I ask: How often do you have manuscripts rejected? Do you obtain sub-par teaching evaluations? Do you have grants rejected, or students leaving you as an advisor? It’s that hidden failure where you can walk around all day and not be able to talk about some of these things. I wanted to assess that.

I felt part of the reason faculty go online is because of the isolation. You encounter a lot of things you can’t explain to other people, the feelings of failure where you work for months and write tens of thousands of words to win a grant and then you get absolutely nothing – it’s all or nothing. It’s a different feeling of failure than a student getting below a cut-off on a test. It’s an outright absolute abject failure that you often don’t talk about. That’s where social media comes in and connects people to each other to share experiences, to talk candidly about these issues. I can explore these issues empirically now – I can look at self-regulation and what strategies are more effective for dealing with stress among different types of faculty in different countries. There aren’t really very many others who have used social media to this extent to allow this kind of research to be done. It is fun, it is engaging, and it’s a personal challenge to see how far I can go.

Author: Andrew Mahon

Original: McGill News (08/18/2015)

Nathan Hall’s sly and witty observations about academic life have attracted a large group of devoted Twitter followers

According to a tweet from Nathan Hall, there are two types of academics: those who use the Oxford comma, those who don’t and those who should. (Give yourself a minute to let that sink in.)

One could argue that there’s a third (fourth?) type of academic, the kind who shares sardonic and pithy nuggets of academic wit with some 130,000 Twitter followers in what has become a wildly successful social media experiment called Shit Academics Say. That third group would have a single member — Nathan Hall.

Hall, an associate professor in the Faculty of Education's Department of Educational and Counselling Psychology, is the man behind more than 2,000 humorous observations about life in academia (including the one referenced in the first sentence of this article). A former elite-level Angry Birds gamer in search of a new hobby, he launched Shit Academics Say under the username @AcademicsSay in September 2013.

“I started a Twitter account as a hobby; one where I didn’t have to leave the couch,” he explains. “It also seemed like an interesting way to connect with people.”

The idea of a ‘shit [insert group name] say’ meme seemed like a good fit for addressing the quirks of the university world — even if the concept had been done before.

“Academics are usually two to five years behind popular culture,” he says. “I knew the meme was dated but also that academics would easily recognize it.”

But from the account's first tweet ("Don't become an academic"), it was clear that Shit Academics Say was not going to be your grandfather's social media hobby. By simply making jokes about academic life, @AcademicsSay attracted its first 10,000 followers in five months, a sign that it had struck a chord with students and professors.

With an admittedly obsessive focus, Hall set his sights on Twitter world domination and employed painstaking research in his efforts to grow Shit Academics Say. He started applying growth hacking strategies, using images, colour, and punctuation strategically, pre-scheduling tweets, and posting in a timely manner (after the death of Leonard Nimoy, Star Trek's Spock: "Live long and publish"). He also monitored analytics and edited content for broader appeal and international audiences; in a bid to build up his audience base in Australia, for instance, Hall carefully determined the optimal times for tweeting to that continent. When famed American statistician and writer Nate Silver re-tweeted @AcademicsSay ("Data are."), Hall knew he was on the right track.

“My objective was to get my numbers as high as possible,” he says, “both as a personal challenge and to test its usefulness for actual research.”

Analytics aside, Shit Academics Say works because Hall is adept at sharing the primal themes of academic life, drawing on his research and experience to tweet about procrastination, writing (or not writing), guilt, tenure (or lack thereof), engagement and other emotional highs and lows.

“Academics want to laugh and I needed a laugh too,” he says.

Beyond the humour and the skewering of academic life, Hall’s Twitter followers provide a unique resource for research in his work as director of McGill’s Achievement Motivation and Emotion research group. By soliciting followers of his Twitter account (and its Facebook version with 94,000 followers), Hall has recruited approximately 9,000 faculty and graduate students from almost 80 countries for online studies on topics ranging from procrastination and impostor syndrome to work-life balance and burnout.

"Shit Academics Say allowed me to reach a lot of people," says Hall. "And I now have the opportunity to directly share the results of this research with others."

Hall is now recognized as a bona fide social media authority. The Chronicle of Higher Education recently invited him to expound on how he developed @AcademicsSay, noting that Hall’s sly Twitter offerings have earned more attention on social media than the official Twitter accounts of such august universities as Harvard and Oxford. Hall has some advice for all those bi-monthly Twitter authors out there looking to take their tweets to the next level.

“It’s important to understand what the platform is and take advantage of its unique features,” he says. “Do some research, do something different and share real elements of your life. Share your insights, thank others for sharing, ask questions and, above all, have fun.”

I do my best proofreading after I hit send.

— Shit Academics Say (@AcademicsSay) June 30, 2015

Choose a discipline you love and you’ll never work a day in your life likely because that field isn’t hiring.

— Shit Academics Say (@AcademicsSay) June 27, 2015

I don’t suffer from overthinking, I enjoy it. Depending on how you define enjoy. And overthinking.

— Shit Academics Say (@AcademicsSay) June 25, 2015

I don’t make mistakes. I create teachable moments.

— Shit Academics Say (@AcademicsSay) June 12, 2015

It is better to have been cited incorrectly, than never to have been cited at all.

— Shit Academics Say (@AcademicsSay) June 11, 2015

Give a man a fish, he’ll eat for a day. Teach a man to use gender-neutral pronouns and he’ll feel uncomfortable with many popular metaphors.

— Shit Academics Say (@AcademicsSay) June 6, 2015

Friends don’t let friends read course evaluation comments.

— Shit Academics Say (@AcademicsSay) April 9, 2015

Twitter has actually helped to improve my writing as the brevity required to convey complex ideas in under 140 characters necessitates (1/3)

— Shit Academics Say (@AcademicsSay) March 18, 2015

I don’t know why it’s not showing. Yes, I reconnected the adapter. Can someone make sure the projector is on. What does this button do.

— Shit Academics Say (@AcademicsSay) January 12, 2015

You had me at “I read your most recent paper.”

— Shit Academics Say (@AcademicsSay) December 7, 2014